About Gabe Rudy

Meet Gabe Rudy, GHI’s Vice President of Product and Engineering and team member since 2002. Gabe thrives in the dynamic and fast-changing field of bioinformatics and genetic analysis. Leading a killer team of Computer Scientists and Statisticians in building powerful products and providing world-class support, Gabe puts his passion into enabling Golden Helix’s customers to accelerate their research. When not reading or blogging, Gabe enjoys the outdoor Montana lifestyle. But most importantly, Gabe truly loves spending time with his sons, daughter, and wife. Follow Gabe on Twitter @gabeinformatics.

This last week I had the pleasure of attending the fourth annual Clinical Genome Conference (TCGC) in Japantown, San Francisco and kicking off the conference by teaching a short course on Personal Genomics Variant Analysis and Interpretation. Some highlights of the conference from my perspective: Talking about clinical genomics is no longer a wonder-fest of individual case studies, but a… Read more »

Yes, I said it. “Them be fighting words” you may say. Well, it’s worth putting a stake in the ground when you have worked hard to have a claim worth staking. We have explored the landscape, surveyed the ravines and dangerous cliffs, laboriously removed the boulders and even dynamited a few tree stumps. Stake planted. Ok, so now I’m going… Read more »

As VarSeq has been evaluated and chosen by more and more clinical labs, I have come to respect how unique each lab’s analytical use cases are. Different labs may specialize in cancer therapy management, specific hereditary disorders, focused gene panels or whole exomes. Some may expect to spend just minutes validating the analytics and the presence or absence of well-characterized… Read more »

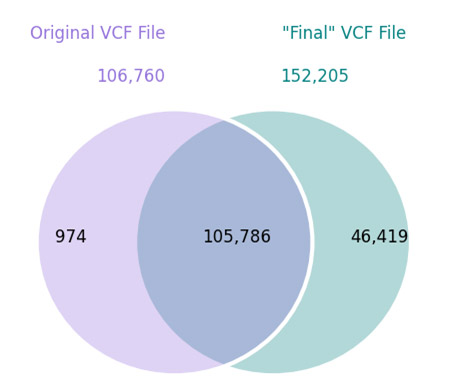

In a previous blog post, I demonstrated using VarSeq to directly analyze the whole genomes of 17 supercentenarians. Since then, I have been working with the variant set from these long-lived genomes to prepare a public data track useful for annotation and filtering. Well, we just published the track last week, and I’m excited to share some of the details… Read more »

If you haven’t been closely watching the twittersphere or other headline sources of the genetics community, you may have missed the recent chatter about the whole genome sequencing of 17 supercentenarians (people who live beyond 110 years). While genetics only explains 20-30% of the longevity of those with average life-spans, it turns out there is a number of good reasons… Read more »

To say the announcement of Dan MacArthur’s group’s release of the Exome Aggregation Consortium (ExAC) data was highly anticipated at ASHG 2014 would be an understatement. Basically, there were two types of talks at ASHG. Those that proceeded the official ExAC release talk and referred to it, and those that followed the talk and referred to it. Why is this… Read more »

I’m sitting in the Smithsonian Air and Space Museum basking in the incredible product of human innovation and the hard work of countless engineers. My volunteer tour guide started us off at the Wright brother’s fliers and made a point of saying it was only 65 years from lift off at Kitty Hawk to the landing of a man on the moon…. Read more »

You probably haven’t spent much time thinking about how we represent genes in a genomic reference sequence context. And by genes, I really mean transcripts since genes are just a collection of transcripts that produce the same product. But in fact, there is more complexity here than you ever really wanted to know about. Andrew Jesaitis covered some of this… Read more »

When researchers realized they needed a way to report genetic variants in scientific literature using a consistent format, the Human Genome Variation Society (HGVS) mutation nomenclature was developed and quickly became the standard method for describing sequence variations. Increasingly, HGVS nomenclature is being used to describe variants in genetic variant databases as well. There are some practical issues that researchers… Read more »

I’m a believer in the signal. Whole genomes and exomes have lots of signal. Man, is it cool to look at a pile-up and see a mutation as clear as day that you arrived at after filtering through hundreds of thousands or even millions of candidates. When these signals sit right in the genomic “sweet spot” of mappable regions with… Read more »

In preparation for a webcast I’ll be giving on Wednesday on my own exome, I’ve been spending more time with variant callers and the myriad of false-positives one has to wade through to get to interesting, or potentially significant, variants. So recently, I was happy to see a message in my inbox from the 23andMe exome team saying they had… Read more »

After my latest blog post, Jeffrey Rosenfeld reached out to me. Jeff is a member of the Analysis Group of the 1000 Genomes Project and shared some fascinating insights that I got permission to share here: Hi Gabe, I saw your great blog post about the problems in the lack of overlap between Complete Genomics and 1000 Genomes data. I… Read more »

Today I ran into an interesting fact about how a prolifically used catalog of population controls classifies African Americans with potential impacts on research outcomes. The 1000 Genomes Project is arguably our best common set of controls used in genomic studies. They recently finished what was termed as “Phase 1” of the project, and they have been releasing full sets… Read more »

Type 2 Diabetes, Rheumatoid Arthritis, Obesity, Chrohn’s Diseases and Coronary Heart Disease are examples of common, chronic diseases that have a significant genetic component. It should be no surprise that these diseases have been the target of much genetic research. Yet over the past decade, the tools of our research efforts have failed to unravel the complete biological architecture of… Read more »

In a series of previous blog posts, I gave an overview of Next Generation Sequencing trends and technologies. In Part Two of the three part series, the range of steps and programs used in the bioinfomatics of NGS data was defined as primary, secondary and tertiary analysis. In Part Three I went into more details on the needs and workflows… Read more »

A GPU can produce an enormous boost in performance for many scientific computing applications. Since we announced the availability of SNP & Variation Suite’s incorporation of GPUs to dramatically speed up copy number segmentation, we’ve received numerous inquiries on recommendations for what GPU to purchase. Unfortunately the technical terminology and choices can be a bit confusing. In this article I… Read more »

The advances in DNA sequencing are another magnificent technological revolution that we’re all excited to be a part of. Similar to how the technology of microprocessors enabled the personalization of computers, or how the new paradigms of web 2.0 redefined how we use the internet, high-throughput sequencing machines are defining and driving a new era of biology. Biologists, geneticists, clinicians,… Read more »

When you think about the cost of doing genetic research, it’s no secret that the complexity of bioinformatics has been making data analysis a larger and larger portion of the total cost of a given project or study. With next-gen sequencing data, this reality is rapidly setting in. In fact, if it hasn’t already, it’s been commonly suggested that the… Read more »

If you have had any experience with Golden Helix, you know we are not a company to shy away from a challenge. We helped pioneer the uncharted territory of copy number analysis with our optimal segmenting algorithm, and we recently handcrafted a version that runs on graphical processing units that you can install in your desktop. So it’s probably no… Read more »

Why should a genetic researcher care about the latest in video gaming technology? The answer is video graphics cards or Graphics Processing Units (GPUs). For certain computational tasks, a single GPU can perform as well as an entire cluster of CPUs for only a fraction of the cost. And because video gaming has grown into a highly competitive multi-billion dollar… Read more »